How Can This Framework Help Your Business?

The LLM Security Framework for Enterprise AI provides a structured way to assess your organization’s AI exposure, prioritize risks by business impact, and define clear ownership for mitigation. In minutes, you can identify where your systems are vulnerable and what requires immediate action.

Download the framework to move from general awareness to controlled, accountable execution.


Why LLM Security Is Crucial for Enterprises

In 2026, LLMs are embedded in customer support, internal knowledge systems, and automated workflows, and adoption often outpaces formal governance.

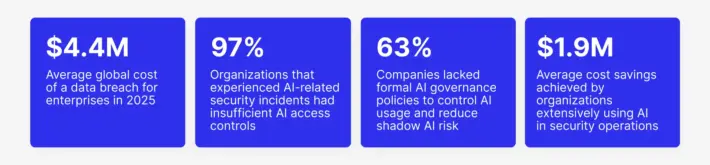

The financial exposure is significant. According to IBM, the global average cost of a data breach is $4.4M, even after a 9% decrease driven by faster detection and containment. IBM also reports that 97% of organizations that experienced an AI-related security incident lacked proper AI access controls.

Recent cases include source code leakage, legally binding chatbot errors, and AI agents exporting sensitive data without approval. These are operational realities — and without structured governance, the downside risk can quickly outweigh the expected ROI of AI initiatives. LLM security protects margin, reputation, and strategic investment.

According to the IBM report:

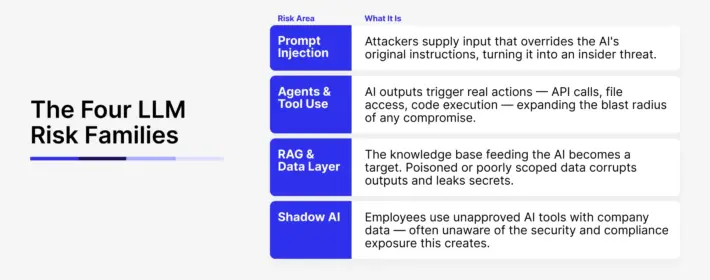

The Four Critical LLM Risk Families

Enterprise LLM exposure is multi-dimensional. Each risk family requires different controls and oversight.

Prompt Injection

Attackers override AI instructions by embedding hidden commands in emails, documents, or web content. Because prevention is never absolute, systems must be designed to limit and contain compromise.

Agents & Tool Use

AI outputs trigger real-world actions such as API calls, database writes, emails, or system updates. If compromised, an agent can escalate privileges or move laterally across systems without direct human oversight.
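One way to keep a compromised agent from acting freely is to route every proposed tool call through a default-deny gate. The sketch below is illustrative only: the tool names, the two tiers, and the `authorize` function are hypothetical and are not taken from the framework.

```python
from dataclasses import dataclass, field

# Hypothetical policy: tool names and tiers are illustrative assumptions.
READ_ONLY_TOOLS = {"search_docs", "get_ticket"}
APPROVAL_REQUIRED_TOOLS = {"send_email", "update_record"}


@dataclass
class ToolCall:
    name: str
    args: dict = field(default_factory=dict)


def authorize(call: ToolCall, human_approved: bool = False) -> str:
    """Return 'allow', 'needs_approval', or 'deny' for a proposed tool call."""
    if call.name in READ_ONLY_TOOLS:
        return "allow"
    if call.name in APPROVAL_REQUIRED_TOOLS:
        # Actions with side effects wait for an explicit human decision.
        return "allow" if human_approved else "needs_approval"
    # Default deny: anything not explicitly listed is rejected.
    return "deny"
```

Because unlisted tools are denied by default, a model that is talked into requesting an unexpected action cannot execute it, and actions with side effects still wait for a person.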

RAG & Data Layer

The knowledge base feeding the AI becomes a target. Poisoned or poorly segmented data can corrupt outputs and expose proprietary information at scale.
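Segmentation can be enforced at retrieval time by filtering chunks against the requesting user's entitlements before anything reaches the prompt. This is a minimal sketch under assumed names: the `Chunk` type, the `allowed_groups` label, and `filter_by_access` are hypothetical, and a real deployment would more likely apply the filter inside the vector store query.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    allowed_groups: frozenset  # hypothetical access label stored with each chunk


def filter_by_access(chunks, user_groups):
    """Keep only chunks the user is entitled to see, before prompt assembly."""
    user_groups = frozenset(user_groups)
    # A chunk passes if it shares at least one group with the user.
    return [c for c in chunks if c.allowed_groups & user_groups]
```

Chunks with an empty `allowed_groups` set are never returned, so unlabeled data fails closed instead of leaking by default.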

Shadow AI

Employees use unapproved AI tools with company data. Without governed alternatives, this creates invisible compliance gaps and unmanaged data exposure across departments.

The LLM Risk Assessment Matrix

Most organizations acknowledge AI risk. Few can quantify it.

The Risk Assessment Matrix in our framework translates abstract exposure into a clear executive risk profile. Within minutes, leadership can evaluate likelihood and business impact across the four risk families and identify where immediate attention is required.

Instead of broad security discussions, you gain structured prioritization based on business consequences — operational disruption, regulatory exposure, and data breach risk. It provides a concrete foundation for investment decisions and board-level conversations.
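To illustrate how a likelihood and impact assessment becomes a ranked list, here is a minimal sketch. The 1 to 5 scales, the multiplication rule, and the priority thresholds are common conventions assumed for the example, not the framework's actual scoring method.

```python
# Assumed scoring: likelihood and impact each rated 1-5, score = product.
# Thresholds below are illustrative, not taken from the framework.


def score(likelihood: int, impact: int) -> int:
    if not (1 <= likelihood <= 5 and 1 <= impact <= 5):
        raise ValueError("likelihood and impact must be between 1 and 5")
    return likelihood * impact


def priority(s: int) -> str:
    if s >= 15:
        return "immediate"
    if s >= 8:
        return "planned"
    return "monitor"


def rank(assessment: dict) -> list:
    """Map {family: (likelihood, impact)} to rows sorted by descending score."""
    rows = [(family, score(l, i)) for family, (l, i) in assessment.items()]
    rows.sort(key=lambda row: row[1], reverse=True)
    return [(family, s, priority(s)) for family, s in rows]
```

Calling `rank` with made-up ratings such as `{"Shadow AI": (5, 3), "Prompt Injection": (4, 4)}` puts Prompt Injection first with a score of 16, which is the kind of ordering that can anchor a board-level discussion.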

The 90-Day LLM Security Action Plan

The framework includes a structured 90-day roadmap aligned directly with the four risk families.

It defines ownership across AI/ML engineering, security, data, DevOps, IT governance, and HR. Controls are sequenced logically, from assessment and containment to monitoring and governance, which removes ambiguity around responsibilities and timelines.

The result is clarity and accountability. Rather than isolated technical patches, the plan connects each risk area to defined controls, accountable owners, and measurable oversight. It shifts AI security from reactive fixes to managed execution.