In our guide to deploying AI in 2026, we outlined how to choose the right operating model – Buy, Build, or Hybrid – based on your competitive position, data maturity, and regulatory exposure. This article addresses the question that inevitably follows that decision: how do you know if it’s working?

Here we’ll start with a controversial point: most companies measure the wrong things, in the wrong order, for the wrong audience when it comes to AI. The good news is that it is possible to build a measurement system that captures value as it happens. And Sombra’s AI Lab is structured to help enterprises get this right before they spend another quarter in pilot purgatory.

For organizations exploring implementation, our AI software development services and gen AI development help translate AI initiatives into measurable financial outcomes.

The Importance of AI ROI in 2026

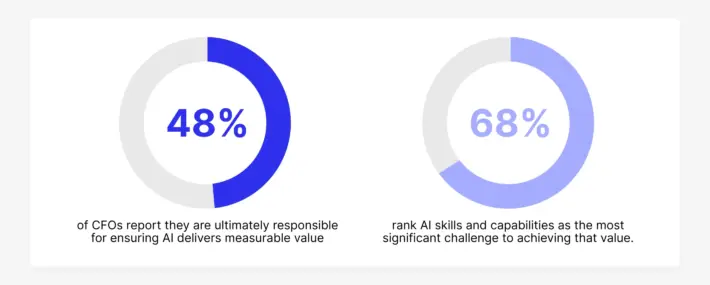

Measuring AI ROI is no longer optional. As AI budgets grow and CFO accountability increases, organizations must prove financial impact — not just technical success. Without clear ROI measurement, even successful AI initiatives risk being deprioritized, while ineffective ones continue consuming resources.

The Measurement Problem – Why Most AI ROI Is Invisible

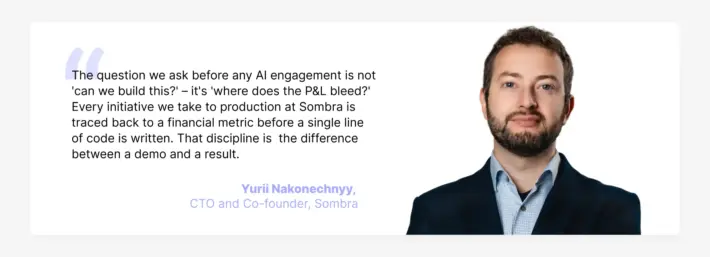

When organizations ask ‘What’s the ROI of our AI?’, they usually get one of two answers: either a confident number that nobody can actually trace back to the P&L, or a vague gesture toward productivity gains and employee satisfaction scores. Both are useless to a CFO.

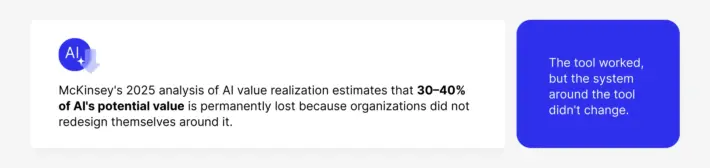

The productivity-gains story dominated enterprise AI narratives in 2023 and 2024. The logic seemed sound: if a coding assistant saves developers 20% of their time, that’s a 20% reduction in labor costs or a 20% increase in output. Vendor pitch decks were full of time-saving calculations, and boards approved budgets on the strength of them.

The problem is that ‘time saved’ does not appear on a Profit & Loss statement unless it is explicitly captured through operational restructuring. Saving ten minutes per person per day across a thousand employees is statistically significant but operationally invisible. You cannot reduce headcount by fractional percentages. Unless workflows are reorganized to aggregate these micro-gains – combining roles, eliminating process steps, reducing overtime – the labor cost line remains flat while the software cost line rises.
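
To make the arithmetic concrete, here is a minimal sketch of the ten-minutes example. The 8-hour workday is an assumption added for illustration; the other two figures come from the paragraph above.

```python
# Figures from the paragraph above; the 8-hour workday is an assumption.
MINUTES_SAVED_PER_PERSON_PER_DAY = 10
EMPLOYEES = 1_000
WORKDAY_MINUTES = 8 * 60


def aggregate_time_saved(minutes_per_person, employees, workday_minutes):
    """Return total hours saved per day, the FTE equivalent, and each person's share of a day."""
    total_minutes = minutes_per_person * employees
    total_hours = total_minutes / 60
    fte_equivalent = total_minutes / workday_minutes
    share_per_person = minutes_per_person / workday_minutes
    return total_hours, fte_equivalent, share_per_person


total_hours, fte_equivalent, share_per_person = aggregate_time_saved(
    MINUTES_SAVED_PER_PERSON_PER_DAY, EMPLOYEES, WORKDAY_MINUTES
)
print(f"Total hours saved per day: {total_hours:.1f}")
print(f"FTE equivalent: {fte_equivalent:.1f}")
print(f"Share of each person's day: {share_per_person:.1%}")
```

Under these assumptions the aggregate is roughly 21 full-time equivalents, yet it is spread as about 2% of each person's day. That is why the saving stays off the P&L until roles or process steps are restructured to collect it.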

Source: McKinsey’s 2025 analysis

The Common Pitfalls in Measuring ROI of AI

Most companies measure activity instead of outcomes. Metrics like time saved, number of prompts, or tasks completed rarely translate into financial impact. Another common mistake is failing to isolate AI impact from other changes or skipping baseline measurements entirely.

To avoid this, organizations must align metrics with financial outcomes, establish control groups, and define success criteria before deployment. Without this discipline, AI ROI remains speculative rather than provable.

The Four Key Components of AI ROI Measurement

CFOs speak in three currencies: margin expansion, cost reduction, and revenue growth. An AI investment must be translatable into at least one of these within a defined timeframe to survive budget scrutiny.

Source: January 2026 RGP survey of senior finance leaders

The measurement framework for finance needs has four components:

- Baseline first. Before deploying AI into any workflow, document current performance: cost per unit processed, time per transaction, error rate, headcount allocated, or revenue per account touched. This baseline is the denominator of every ROI calculation.

- Isolate the change. When AI is deployed, separate its effects from concurrent changes in staffing, tooling, or market conditions. This requires a control group or a structured rollout design where comparable teams have and have not yet received the intervention.

- Measure the financial output, not the AI output. The unit of measurement is not ‘queries handled’ or ‘content pieces generated.’ It is ‘cost per customer interaction,’ ‘gross margin on AI-assisted deals versus non-assisted deals,’ or ‘reduction in accounts payable processing cost as a percentage of invoice volume.’

- Time-gate the claim. A 45-day pilot that shows promising signals is not an ROI claim. ROI requires a measurement window long enough for the change to stabilize in production – typically 90 to 180 days minimum for operational workflows.
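
One way to combine the baseline and isolation steps is a difference-in-differences comparison: measure the change in cost per unit for the team that received AI, then subtract the change seen in a comparable control team over the same window. The sketch below assumes that approach; the class name, function names, and all figures are hypothetical and are not taken from any Sombra tooling.

```python
from dataclasses import dataclass


@dataclass
class PeriodCost:
    total_cost: float  # fully loaded cost for the measurement window
    units: int         # units processed in the same window

    @property
    def cost_per_unit(self) -> float:
        return self.total_cost / self.units


def attributable_saving_per_unit(treated_before, treated_after,
                                 control_before, control_after):
    """Difference-in-differences: the treated team's change minus the control team's change."""
    treated_change = treated_after.cost_per_unit - treated_before.cost_per_unit
    control_change = control_after.cost_per_unit - control_before.cost_per_unit
    return control_change - treated_change  # positive means a saving attributable to AI


def roi(saving_per_unit, units, ai_cost):
    """Net benefit over the window divided by what the AI cost over the same window."""
    benefit = saving_per_unit * units
    return (benefit - ai_cost) / ai_cost


# Hypothetical figures for illustration only.
saving = attributable_saving_per_unit(
    treated_before=PeriodCost(100_000, 10_000),  # 10.00 per unit
    treated_after=PeriodCost(66_000, 11_000),    # 6.00 per unit
    control_before=PeriodCost(100_000, 10_000),  # 10.00 per unit
    control_after=PeriodCost(95_000, 10_000),    # 9.50 per unit
)
window_roi = roi(saving, units=11_000, ai_cost=20_000)
print(f"Attributable saving per unit: {saving:.2f}")
print(f"ROI over the window: {window_roi:.1%}")
```

In this made-up case the treated team's cost per unit fell by 4.00, but 0.50 of that also happened in the control team, so only 3.50 per unit is credited to the AI deployment.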

The Anatomy of ROI: Where AI Value Actually Lives

After working with enterprises across financial services, energy, healthcare, logistics, and retail, Sombra AI Lab has identified three distinct zones where AI generates value that can be tracked against P&L outcomes.

Zone 1: Cost Arbitrage – 30 to 90 Days

This is the zone most enterprises should start in. It targets high-volume, repetitive, knowledge-heavy workflows where AI replaces linear human labor costs with elastic compute costs. The financial logic is direct: a human agent handling insurance claims or invoice exceptions costs $30–50 per hour in fully loaded costs. An AI agent handling the same workflow at scale costs a fraction of that – and the cost is variable, not fixed.

The key requirement is volume. Cost arbitrage only works when there is sufficient transaction volume for the fixed cost of AI deployment to be amortized quickly. A workflow processing 50 transactions per month is not a cost arbitrage opportunity. A workflow processing 50,000 transactions per month is.
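
The volume effect can be sketched as a simple payback calculation. The deployment cost and per-transaction costs below are hypothetical placeholders; only the two monthly volumes echo the paragraph above.

```python
def payback_months(fixed_deployment_cost, monthly_volume,
                   human_cost_per_unit, ai_cost_per_unit):
    """Months needed for per-unit savings to repay the fixed deployment cost."""
    saving_per_unit = human_cost_per_unit - ai_cost_per_unit
    if saving_per_unit <= 0 or monthly_volume <= 0:
        return float("inf")  # the deployment never pays back
    return fixed_deployment_cost / (saving_per_unit * monthly_volume)


# Hypothetical costs; replace with figures from your own baseline.
FIXED_COST = 150_000
HUMAN_COST_PER_UNIT = 5.00
AI_COST_PER_UNIT = 1.00

low_volume = payback_months(FIXED_COST, 50, HUMAN_COST_PER_UNIT, AI_COST_PER_UNIT)
high_volume = payback_months(FIXED_COST, 50_000, HUMAN_COST_PER_UNIT, AI_COST_PER_UNIT)
print(f"Payback at 50 transactions per month: {low_volume:.0f} months")
print(f"Payback at 50,000 transactions per month: {high_volume:.2f} months")
```

With identical per-unit savings, the low-volume workflow in this illustration takes 750 months to pay back and the high-volume one less than a month.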

Some documented AI benchmarks from 2025–2026 production deployments:

- AI-assisted invoice processing in financial services has reduced cost per invoice by 40–65% in production environments.

- AI agents handling first-line customer support resolve 60–70% of inbound queries without human intervention, reducing cost per resolution by 35–50%.

- In legal and compliance document review, AI reduces review time per document by 70–80%, with direct implications for billing cost or internal team capacity.

Zone 2: Revenue Enhancement – 60 to 180 Days

This zone is harder to attribute but carries a larger upside. AI creates revenue leverage when it improves the quality or speed of decisions that affect sales outcomes: lead qualification, pricing, proposal generation, or customer retention.

Bain & Company’s 2025 technology report documents consistent improvements in win rates of 15–25% among sales teams using AI-assisted pipeline management. AI increases the number of accounts a relationship manager can actively manage – not by working faster, but by offloading research, CRM updates, and follow-up drafting. The revenue impact is measured in pipeline conversion rate and deal size, not in time saved.

Customer retention is the second major lever. AI systems that identify early signs of churn and trigger personalized outreach have demonstrated 10–20% reductions in churn rates in sectors with high-value, recurring contracts. At a unit economics level, preventing one enterprise customer from churning is worth tens or hundreds of thousands of dollars. Connecting that outcome to AI activity requires a CRM that logs AI touchpoints and a revenue operations team that tracks the downstream result.
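
As a rough illustration of the retention lever, the sketch below converts a churn reduction into retained revenue. It assumes the reduction is relative to the baseline churn rate, and every input value is hypothetical.

```python
def retained_revenue(customers, baseline_churn_rate,
                     relative_churn_reduction, annual_contract_value):
    """Annual revenue kept because fewer customers churn than at baseline."""
    churned_without_ai = customers * baseline_churn_rate
    customers_saved = churned_without_ai * relative_churn_reduction
    return customers_saved * annual_contract_value


# Hypothetical inputs: 500 accounts, 12% baseline churn,
# a 15% relative reduction, and an 80,000 annual contract value.
revenue_kept = retained_revenue(500, 0.12, 0.15, 80_000)
print(f"Revenue retained per year: {revenue_kept:,.0f}")
```

The figure only becomes defensible when the CRM can show which of the saved accounts actually received an AI-triggered touchpoint.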

Zone 3: Structural Advantage – 6 to 18 Months

This is the zone where AI stops being a cost or revenue tool and becomes a competitive differentiator. BCG’s September 2025 ‘Widening AI Value Gap’ report documents that ‘future-built’ organizations – those that have crossed from experimentation into scaled production – are generating 3.6 times the total shareholder return of their peers. The mechanism is compounding: every quarter of production-scale AI operation generates proprietary data, institutional learning, and operational capability that widens the gap.

This zone cannot be accelerated by buying more software. It requires the discipline of Zones 1 and 2: documenting what works, operationalizing what scales, and building measurement infrastructure that accumulates signal over time.

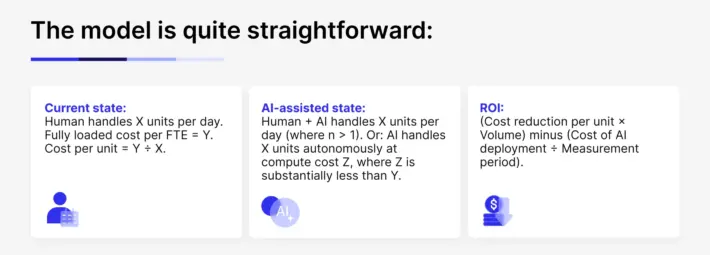

The Unit Economics Model

The most reliable way to translate AI value into financial language is through unit economics – the cost and revenue associated with a single unit of production: a transaction, a customer, a decision, a document.

This model reveals that AI ROI is volume sensitive. Low-volume workflows will rarely show positive unit economics within 12 months. High-volume workflows often show positive ROI within 60–90 days of stable production deployment.

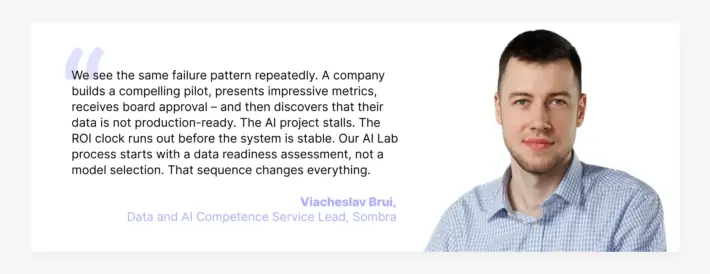

It also reveals the hidden cost that destroys most ROI calculations: data preparation. Analysis of enterprise AI deployments consistently shows that 60–80% of the time and cost of an AI project is spent on data – cleaning, labelling, structuring, and integrating. This cost is rarely budgeted correctly in the initial business case. See Sombra’s piece on the operational cost of low-trust data for a detailed breakdown of how data quality problems compound once AI is in production.
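
A minimal sketch of a unit economics calculation that includes data preparation follows. It assumes the initial business case budgeted only the non-data work and that data takes a fixed share of total project cost; all names and numbers are illustrative assumptions.

```python
def total_project_cost(non_data_cost, data_share):
    """Scale a budget that covers only non-data work up to the full project cost."""
    if not 0 <= data_share < 1:
        raise ValueError("data_share must be in [0, 1)")
    return non_data_cost / (1 - data_share)


def net_value_per_unit(human_cost_per_unit, ai_run_cost_per_unit,
                       project_cost, monthly_volume, months=12):
    """Saving per unit after spreading the project cost over the units processed."""
    amortized_cost = project_cost / (monthly_volume * months)
    return human_cost_per_unit - (ai_run_cost_per_unit + amortized_cost)


# Hypothetical: 90,000 budgeted for build and integration, with data work
# assumed to be 70% of the true total (inside the 60-80% range cited above).
full_cost = total_project_cost(90_000, data_share=0.70)

naive = net_value_per_unit(4.00, 1.00, 90_000, monthly_volume=50_000)
realistic = net_value_per_unit(4.00, 1.00, full_cost, monthly_volume=50_000)
low_volume = net_value_per_unit(4.00, 1.00, full_cost, monthly_volume=500)

print(f"Full project cost: {full_cost:,.0f}")
print(f"Net value per unit, data cost ignored: {naive:.2f}")
print(f"Net value per unit, data cost included: {realistic:.2f}")
print(f"Net value per unit at low volume: {low_volume:.2f}")
```

In this example the high-volume workflow stays positive even with the full cost included, while the same project at 500 units per month is deeply negative over 12 months.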

The AI Operating Model – Structuring for Provable Returns

One of the most consistent findings across enterprise AI research is that the technology itself is rarely the variable that determines success. Tools like GPT, OpenClaw, or Claude are available to every organization. The differentiator is the operating model used to deploy, measure, and scale initiatives (the full architecture of enterprise AI operating models is covered separately).

Organizations that qualify as ‘AI high performers’ share four organizational practices. They redesign workflows rather than overlaying AI on existing ones; invest the majority of AI resources in people and process change rather than software; establish clear financial accountability for AI outcomes; and shut down failing projects quickly rather than allowing them to consume resources indefinitely.

This is where we recommend starting from an operational standpoint as well.

The Sombra AI Lab Methodology: Research Before Execution

In practice, most enterprise AI projects begin with a technology or a vendor and work backwards to a use case. The result is what researchers call ‘AI Theatre’ – initiatives that generate compelling demos and dissolve in production. The AI Lab inverts this sequence through five structured phases.

Phase 1: Business Problem Identification

Identify the 5–10 workflows where AI could generate the highest financial impact. Prioritization criteria: transaction volume (higher is better), current cost per unit (higher is better), error rate and its downstream cost (higher is better), and degree of structure in the underlying data (more structured means faster to deploy). This phase produces a prioritized use case map with preliminary financial impact estimates – the document that goes to the CFO, not a technology roadmap.
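
The prioritization criteria above can be turned into a simple ranking. The sketch below assumes equal weights and max-normalization, neither of which comes from the AI Lab methodology itself; the workflows and their values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    monthly_volume: int
    cost_per_unit: float
    error_rate: float      # share of units with errors, 0 to 1
    data_structure: float  # analyst-assigned score, 0 (unstructured) to 1 (structured)


def rank_workflows(workflows):
    """Score each workflow as the mean of its four max-normalized criteria."""
    def normalized(values):
        top = max(values)
        return [v / top if top else 0.0 for v in values]

    columns = [
        normalized([w.monthly_volume for w in workflows]),
        normalized([w.cost_per_unit for w in workflows]),
        normalized([w.error_rate for w in workflows]),
        normalized([w.data_structure for w in workflows]),
    ]
    scores = [sum(col[i] for col in columns) / len(columns)
              for i in range(len(workflows))]
    return sorted(zip(workflows, scores), key=lambda pair: pair[1], reverse=True)


# Hypothetical candidates for illustration.
candidates = [
    Workflow("invoice exceptions", 50_000, 4.0, 0.05, 0.8),
    Workflow("contract review", 500, 60.0, 0.02, 0.4),
    Workflow("support triage", 30_000, 6.0, 0.08, 0.6),
]
ranking = rank_workflows(candidates)
for workflow, score in ranking:
    print(f"{workflow.name}: {score:.2f}")
```

A score like this is only a first filter. The financial impact estimate for each shortlisted workflow still has to be built from its own baseline.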

Phase 2: Economic Validation

Before any development begins, define the ROI model for the top priority use case: what is the baseline, what is the target, what is the measurement method, and what are the ‘kill criteria’ – the thresholds below which the project is shut down. Kill criteria are the discipline mechanism that prevents pilot purgatory. Every project charter includes a minimum adoption rate at Day 45 (below 40% signals a deployment problem), a maximum error rate after three tuning iterations (above 10% signals a model-fit problem), and a maximum cost per transaction relative to the human baseline (above 80% signals the unit economics don’t close).
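
The three thresholds above translate directly into an automated check. The sketch below uses the thresholds from the text; the class and function names are hypothetical, and the snapshot values are invented for illustration.

```python
from dataclasses import dataclass

# Thresholds taken from the kill criteria described above.
MIN_ADOPTION_DAY_45 = 0.40
MAX_ERROR_RATE = 0.10
MAX_COST_RATIO = 0.80


@dataclass
class PilotSnapshot:
    adoption_rate_day_45: float
    error_rate_after_tuning: float  # measured after three tuning iterations
    ai_cost_per_transaction: float
    human_cost_per_transaction: float


def kill_signals(snapshot):
    """Return the list of kill criteria the pilot currently violates."""
    signals = []
    if snapshot.adoption_rate_day_45 < MIN_ADOPTION_DAY_45:
        signals.append("deployment problem: adoption below 40% at Day 45")
    if snapshot.error_rate_after_tuning > MAX_ERROR_RATE:
        signals.append("model-fit problem: error rate above 10%")
    cost_ratio = snapshot.ai_cost_per_transaction / snapshot.human_cost_per_transaction
    if cost_ratio > MAX_COST_RATIO:
        signals.append("unit economics problem: AI cost above 80% of human baseline")
    return signals


# Hypothetical pilots for illustration.
healthy = PilotSnapshot(0.55, 0.04, 1.20, 4.00)
failing = PilotSnapshot(0.30, 0.15, 3.60, 4.00)
print(kill_signals(healthy))
print(kill_signals(failing))
```

Writing the check down before the pilot starts is the point: the thresholds are agreed in the charter, not negotiated after the results come in.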

Phase 3: PoC with Defined Success Metrics

The proof of concept is built against the economic model, and success is defined in financial terms before the PoC begins. Sombra’s AI Accelerator compresses this phase by providing reusable components – RAG pipelines, agent frameworks, evaluation tooling – that allow a working PoC to be validated in two to three weeks rather than six to eight. For example, we delivered a custom RAG solution in approximately two weeks using the Accelerator while preserving architectural flexibility.

Phase 4: Production and Measurement Infrastructure

The transition from PoC to production is where most projects fail. The production system requires monitoring that tracks both financial outcomes and technical performance. This includes cost-per-transaction dashboards visible to finance, anomaly detection that alerts when AI outputs deviate from acceptable ranges, and a human-in-the-loop escalation path for edge cases. Context engineering – designing the information environment in which the AI operates – is a critical and often overlooked element of production stability.
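
As one possible shape for the anomaly alert, the sketch below flags a daily cost-per-transaction value that sits far from its recent history. The z-score rule and the threshold of 3 are assumptions chosen for simplicity, not a description of Sombra's monitoring stack.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, z_threshold=3.0):
    """Flag the latest value if it is more than z_threshold standard deviations from the mean."""
    if len(history) < 2:
        return False  # not enough data to estimate a spread
    spread = stdev(history)
    if spread == 0:
        return latest != history[0]
    return abs(latest - mean(history)) / spread > z_threshold


# Hypothetical daily cost-per-transaction readings.
recent_costs = [1.00, 1.02, 0.98, 1.01, 0.99]
print(is_anomalous(recent_costs, 1.50))
print(is_anomalous(recent_costs, 1.01))
```

A production system would more likely use a rolling window and route flagged values into the human-in-the-loop escalation path described above.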

Phase 5: Governance and Scale

Once a single use case is in stable production with documented ROI, the pattern is replicated. The AI Lab accumulates organizational capital, including documented deployment patterns, evaluated model performance data, integration templates, and a growing body of institutional knowledge that facilitates the faster and cheaper deployment of the next use case.

How to Get Started: Practical Steps to Ensure AI ROI Success

Start with high-impact workflows, define financial success upfront, and validate assumptions early. Focus on measurable outcomes, not experimentation. Establish governance and ensure every initiative has clear ownership, baseline metrics, and defined timelines.

Identifying High-Impact Workflows for AI

Focus on workflows with high volume, high cost per unit, and measurable outputs. Prioritize processes where inefficiencies directly impact financial performance, such as customer operations, finance, or sales support. These areas offer the fastest path to visible ROI.

Economic Validation and the Importance of ‘Kill Criteria’

Before building anything, define expected ROI and thresholds for success. Kill criteria ensure that underperforming projects are stopped early, preventing wasted investment. This discipline is critical to avoiding pilot purgatory and maintaining focus on high-value initiatives.

ROI Benchmarks by Function – What ‘Good’ Looks Like in 2026

To give you a practical reference point, here are ROI benchmarks from production AI deployments across enterprise functions. Some figures come from the market, while others reflect our own observations from client projects.

Finance and Accounting

AI in accounts payable processing reduces cost per invoice by 40–65% in high-volume environments (>5,000 invoices per month). Invoice cycle times drop from days to hours. Error rates decline because AI applies validation rules consistently, without the variability introduced by manual review. Fully loaded payback period: 6–12 months.

Customer Operations

AI-powered first-line support resolves 60–70% of inbound queries without human escalation in mature deployments. Cost per resolution drops 35–50%. Human agents shift to complex and high-value interactions. CSAT scores improve because resolution is faster and more consistent. Payback period: 3–9 months for contact centers with high inbound volume.

Sales

AI-assisted pipeline management increases win rates by 15–25%. Relationship managers actively manage 30–40% more accounts. Proposal generation time decreases by 60–70%. Revenue impact is measured as incremental deal value and improvement in conversion rate – not time saved. Payback period: 6–12 months, with ongoing upside that compounds as the AI learns the customer base.

Software Engineering

AI coding assistants reduce time to first working version by 30–50% in controlled studies. The ROI is highest when time-to-market has revenue implications (competitive markets, seasonal demand, contract milestones) and lowest when developer capacity is the bottleneck rather than speed. For enterprises building software products, this is one of the fastest paths to a positive ROI calculation.

Legal and Compliance

AI contract review reduces review time per document by 70–80%. In legal teams billing by the hour, this compresses the internal cost of review. In compliance functions, AI-assisted regulatory scanning reduces the time required to assess the impact of regulatory changes from weeks to hours – with direct implications for fine avoidance and audit readiness.

Data and Analytics

AI-assisted data engineering reduces pipeline build time by 30–60%, based on Sombra’s data analytics services. More significantly, AI enables smaller data teams to maintain larger and more complex data architectures – compressing the talent cost of operating a modern analytics stack without sacrificing capability.

The Cost of Not Measuring — and the Cost of Not Acting

When AI ROI is not measured properly, two things happen. First, successful projects are shut down because they cannot demonstrate value to finance in a language that finance understands. Second, failing projects survive because there is no framework for terminating them. Both outcomes destroy value — one by eliminating what works, the other by preserving what doesn’t.

The Cost of Inaction

For the CFOs reading this: the cost of a well-run AI program that delivers 200% ROI is visible and controllable. The cost of competitive disadvantage accumulating quarter over quarter is neither visible nor controllable.

BCG’s ‘future-built’ cohort is generating 3.6 times the total shareholder return of their peers. That gap is widening every quarter, and it is being created by organizations that started the measurement discipline earlier, built governance infrastructure more carefully, and scaled what worked more systematically.

The World Economic Forum analysis of CFO AI investment makes the same point: the organizations capturing AI value are not the ones that spent the most. They are the ones that measured and governed most rigorously.

To move forward, we must anchor every AI initiative to clear financial outcomes, defined timelines, and explicit kill criteria. Ensure your AI investments are held to this standard to maximize value and minimize risk.

Strategies to Improve AI ROI

Improving AI ROI requires both technical and operational discipline. Organizations should redesign workflows instead of layering AI onto existing processes, ensure strong data foundations, and align teams around financial outcomes.

Equally important is governance — defining ownership, tracking performance, and shutting down underperforming initiatives early. Companies that succeed treat AI as an operating model, not a tool, continuously optimizing based on measurable results and scaling what works.

How Sombra Delivers Provable AI Returns

Sombra’s AI Lab is structured around a single commitment: no project enters production without a defined financial outcome, a measurement infrastructure to track it, and a governance framework to protect it.

The AI Lab combines three capabilities that typically exist in separate silos inside enterprise organizations: business problem diagnosis (where AI can move the P&L), engineering execution (building systems that work in production), and measurement architecture (dashboards, monitoring, and reporting that translate AI performance into financial language).

For organizations earlier in their AI journey, the AI Accelerator reduces the time from validated use case to working PoC using reusable components built from previous deployments. This compression reduces the cost of validation, which means organizations can test more hypotheses at lower risk before committing to full production investment.

For organizations that have already deployed AI and are struggling to demonstrate value, Sombra’s engagement typically begins with a measurement audit: reviewing what is being tracked, identifying the gap between technical metrics and financial outcomes, and redesigning the measurement infrastructure to produce the numbers the CFO needs to see.

You can review examples of this work across our case studies, including a post-M&A bank integration that generated annual run-rate savings equal to 55% of the stated target, and a data engineering solution that cut costs by 4x while enabling real-time reporting.

The goal is to move the organization from ‘we have an AI program’ to ‘here is what our AI program is worth, and here is the evidence.’ And that is the only AI conversation that matters in 2026.

AI ROI in 2026

This year, AI ROI belongs to the organizations that treat AI as an operating discipline. The companies generating compounding returns are the ones that start with a defined business problem, measure from a real baseline, redesign workflows around the outcome, and govern every initiative with clear ownership and financial accountability.

That is where separation happens. One group keeps funding pilots, collecting activity metrics, and waiting for value to appear. The other builds repeatable systems that produce measurable gains quarter after quarter.

As Viktor Chekh, CEO of Sombra, puts it:

Organizations that answer that question with precision will invest confidently and grow their advantage. Those that cannot will continue to spend time, budget, and leadership attention—without generating lasting returns.

If your team wants to define AI success in financial terms, build the right measurement system, and launch with governance that holds up in production, that work starts in Sombra’s AI Lab.